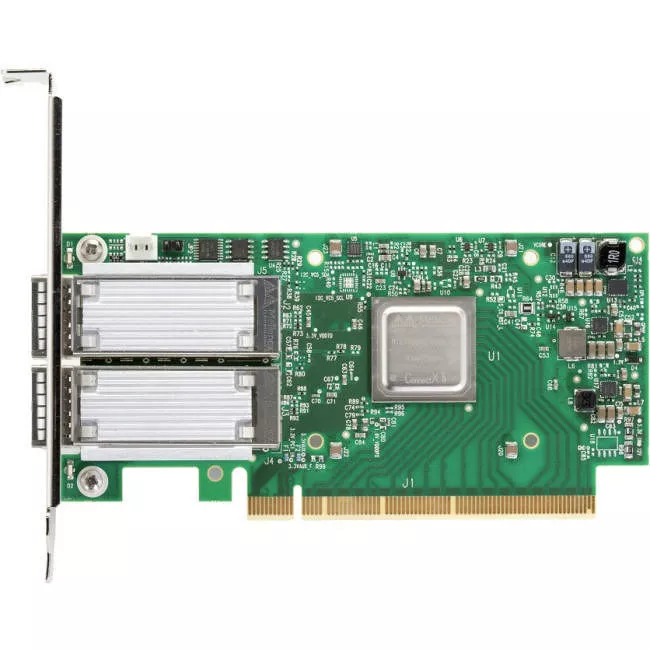

Mellanox MCX516A-GCAT ConnectX-5 EN Network Card - PCIe 3.0 x16 - 50 GbE - 2x Port - QSFP28

$779.83

The ConnectX-5 EN family supports up to two ports of 100Gb Ethernet connectivity (the MCX516A-GCAT provides two 50GbE ports), while delivering sub-600ns latency, extremely high message rates, and PCIe switch and NVMe over Fabric offloads. ConnectX-5 provides high performance and flexibility for the most demanding applications and markets: Machine Learning, Data Analytics, and more.

HPC ENVIRONMENTS

ConnectX-5 delivers high bandwidth, low latency, and high computation efficiency for high-performance, data-intensive, and scalable compute and storage platforms. ConnectX-5 offers enhancements to HPC infrastructures by providing MPI and SHMEM/PGAS and Rendezvous Tag Matching offload, hardware support for out-of-order RDMA Write and Read operations, as well as additional Network Atomic and PCIe Atomic operations support.

ConnectX-5 EN utilizes RoCE (RDMA over Converged Ethernet) technology, delivering low latency and high performance. ConnectX-5 enhances RDMA network capabilities by completing the Switch Adaptive-Routing capabilities and supporting out-of-order data delivery while maintaining ordered completion semantics. This provides multipath reliability and efficient support for all network topologies, including DragonFly and DragonFly+.
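To illustrate what "out-of-order delivery with ordered completion semantics" means, here is a minimal software sketch. It is not how the adapter implements the feature in hardware; the class name `OrderedCompletionQueue` and the use of simple integer sequence numbers are assumptions made purely for illustration. Packets may arrive in any order (for example, over different paths), but completions are only reported once every earlier sequence number has also arrived.

```python
from typing import List, Set


class OrderedCompletionQueue:
    """Hypothetical model: accept arrivals in any order, complete in order."""

    def __init__(self) -> None:
        self._next_seq: int = 0        # lowest sequence number not yet completed
        self._arrived: Set[int] = set()  # arrived but not yet completed

    def on_arrival(self, seq: int) -> List[int]:
        """Record an arrival and return the sequence numbers that can now
        be reported as complete, in strictly increasing order."""
        self._arrived.add(seq)
        completed: List[int] = []
        # Release the contiguous run starting at the next expected number.
        while self._next_seq in self._arrived:
            self._arrived.remove(self._next_seq)
            completed.append(self._next_seq)
            self._next_seq += 1
        return completed


if __name__ == "__main__":
    q = OrderedCompletionQueue()
    for seq in (2, 0, 1):
        print(seq, "->", q.on_arrival(seq))
```

In this model, an arrival that leaves a gap produces no completions, and the arrival that fills the gap releases everything that was waiting behind it.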

ConnectX-5 also supports Burst Buffer offload for background checkpointing without interfering with main CPU operations, and the innovative Dynamic Connected Transport (DCT) service to ensure extreme scalability for compute and storage systems.

STORAGE ENVIRONMENTS

NVMe storage devices are gaining popularity, offering very fast storage access. The evolving NVMe over Fabric (NVMf) protocol leverages RDMA connectivity for remote access. ConnectX-5 offers further enhancements by providing NVMf target offloads, enabling very efficient NVMe storage access with no CPU intervention, and thus improved performance and lower latency.

Moreover, the embedded PCIe switch enables customers to build standalone storage or Machine Learning appliances. As with the earlier generations of ConnectX adapters, standard block and file access protocols can leverage RoCE for high-performance storage access. A consolidated compute and storage network achieves significant cost-performance advantages over multi-fabric networks.

ConnectX-5 enables an innovative storage rack design, Host Chaining, in which different servers can interconnect directly without involving the Top of the Rack (ToR) switch. Alternatively, the Multi-Host technology that was first introduced with ConnectX-4 can be used. Mellanox Multi-Host™ technology, when enabled, allows multiple hosts to be connected to a single adapter by separating the PCIe interface into multiple independent interfaces. With these new rack design alternatives, ConnectX-5 lowers the total cost of ownership (TCO) in the data center by reducing CAPEX (cables, NICs, and switch port expenses) and by reducing OPEX through less switch port management and lower overall power usage.

CLOUD AND WEB2.0 ENVIRONMENTS

Cloud and Web2.0 customers that are developing their platforms on Software-Defined Networking (SDN) environments are leveraging their servers' operating system virtual-switching capabilities to enable maximum flexibility.

Open vSwitch (OVS) is an example of a virtual switch that allows Virtual Machines to communicate with each other and with the outside world. A virtual switch traditionally resides in the hypervisor, and switching is based on twelve-tuple matching on flows. The software-based virtual switch or virtual router is CPU intensive, which affects system performance and prevents full utilization of the available bandwidth.
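As a rough illustration of twelve-tuple flow matching, the sketch below models an exact-match flow table in software. The field list is an assumption: it follows the twelve match fields defined in OpenFlow 1.0, which the text does not name explicitly. The names `FlowKey`, `FlowTable`, and the action strings are hypothetical, and real virtual switches also support wildcards and priorities, which are omitted here.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class FlowKey:
    """Twelve header fields used as the match key (assumed: OpenFlow 1.0 set)."""
    in_port: int
    eth_src: str
    eth_dst: str
    eth_type: int
    vlan_id: int
    vlan_pcp: int
    ip_src: str
    ip_dst: str
    ip_proto: int
    ip_tos: int
    l4_src: int
    l4_dst: int


class FlowTable:
    """Hypothetical exact-match table: one dictionary lookup per packet."""

    MISS_ACTION = "send_to_slow_path"

    def __init__(self) -> None:
        self._flows: Dict[FlowKey, str] = {}

    def add_flow(self, key: FlowKey, action: str) -> None:
        self._flows[key] = action

    def lookup(self, key: FlowKey) -> str:
        # Every packet pays for building the key and hashing twelve fields;
        # done in software, this per-packet work is what consumes host CPU.
        return self._flows.get(key, self.MISS_ACTION)


if __name__ == "__main__":
    table = FlowTable()
    key = FlowKey(1, "aa:aa:aa:aa:aa:01", "aa:aa:aa:aa:aa:02", 0x0800,
                  0, 0, "10.0.0.1", "10.0.0.2", 6, 0, 49152, 443)
    table.add_flow(key, "output:2")
    print(table.lookup(key))
```

Because this lookup runs for every packet, performing it on the host CPU scales poorly with line rate, which is the cost the paragraph above describes.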